Companies are going all-in on artificial intelligence right now, investing millions or even billions into the area while slapping the AI initialism on their products, even when doing so seems strange and pointless.

Heavy investment and increasingly powerful hardware tend to mean more expensive products. To discover if people would be willing to pay extra for hardware with AI capabilities, the question was asked on the TechPowerUp forums.

The results show that over 22,000 people, a massive 84% of the overall vote, said no, they would not pay more. More than 2,200 participants said they didn’t know, while just under 2,000 voters said yes.
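
As a rough sanity check on those figures, here is a minimal sketch that treats the rounded counts from the paragraph above as exact. The real poll numbers are only given approximately ("over", "more than", "just under"), so the output is approximate too.

```python
# Rounded vote counts taken from the article text (assumed exact for this sketch).
votes = {"no": 22_000, "don't know": 2_200, "yes": 2_000}

total = sum(votes.values())
shares = {answer: 100 * count / total for answer, count in votes.items()}

# Total implied by "22,000 votes is 84% of the overall vote".
implied_total = votes["no"] / 0.84

for answer, share in shares.items():
    print(f"{answer}: {share:.1f}%")
print(f"sum of rounded counts: {total}, total implied by 84%: {implied_total:.0f}")
```

The two totals land within a few votes of each other, so the 84% figure is consistent with the counts quoted.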

someone tried to sell me a fucking AI fridge the other day. Why the fuck would I want my fridge to “learn my habits?” I don’t even like my phone “learning my habits!”

Why does a fridge need to know your habits?

It has to keep the food cold all the time. The light has to come on when you open the door.

What could it possibly be learning

Hi Zron, you seem to really enjoy eating shredded cheese at 2:00am! For your convenience, we’ve placed an order for 50lbs of shredded cheese based on your rate of consumption. Thanks!

We also took the liberty of canceling your health insurance to help protect the shareholders from your abhorrent health expenses in the far future

If your fridge spies on you, certain people can get better insight into how healthy your food habits are, how organized you are, how often things go bad and get thrown out, and what medicine (the kind that has to be kept cold) you put there and how often you use it.

That will then affect your insurances, your credit rating, and possibly many other ratings other people are interested in.

I wish products followed your lead and had no AI features, 1995 Toyota Corolla :/

I think you’re being sarcastic, but I unironically agree. Cars and fridges can, and should stay dumb, with the notable exception of battery management systems in electric vehicles. That’s the single acceptable use case for a car IMHO.

Oh I absolutely agree, some things don’t need to be “smart”.

Imagine if someone put a microchip in a potato peeler claiming that it would add features like “sensing the amount of pressure applied to the potato to ensure clean peels”. The reason they haven’t done that is that data would only benefit the user, and they can’t think of a way to have it benefit the company’s profit margins.

I think CarPlay is a wonderful feature. My car should absolutely allow syncing up to my phone. I don’t think it should send telemetry or anything like that though. But I think internal process monitoring should also be a thing. Display error codes, show me that a tire is low, monitor a battery, etc. but the manufacturer shouldn’t get that info. My car shouldn’t know my sex life, and the manufacturer definitely shouldn’t.

- Know when you’re about to put groceries in so it makes the fridge colder so the added heat doesn’t make things go bad.

- Know when you don’t use it and let it get a tiny bit warmer to save a teeny bit of power. (The vast majority of power is cooling new items, not keeping things cold though.)

- Tell you where things are?

- Ummm… Maybe give you an optimized layout of how to store things?

- Be an attack vector on your home’s wifi

- Wait, no, uh,

- Push notifications

- Do you not have phones?

So I can see what you like to eat, then it can tell your grocery store, then your grocery store can raise the prices on those items. That’s the point. It’s the same thing with those memberships and coupon apps. That’s the end goal.

They can see what you like to eat by what you’re buying, LOL. No, not this.

A fridge can give them information on how you eat.

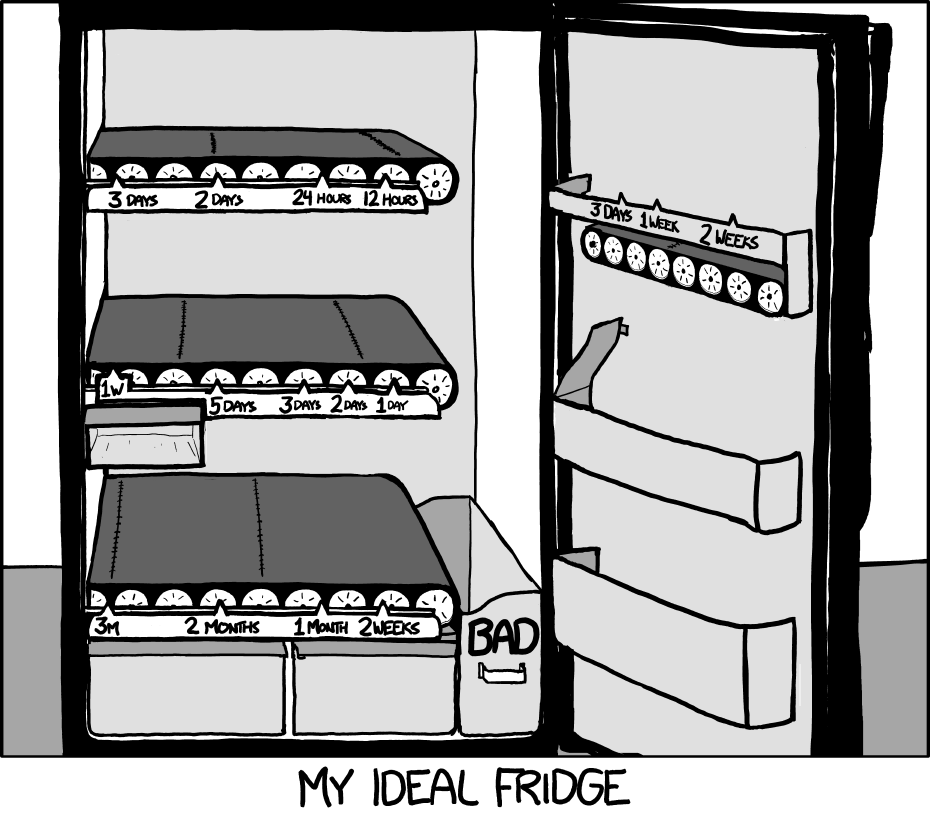

I still want this fridge. (Source)

it doesn’t seem all that hard to make, as long as you don’t mind the severely reduced flexibility in capacity and glass bottles shattering against each other at the bottom

Not to mention the increased expense, loudness, greater difficulty cleaning, and many more points of failure!

Now THIS I could get behind! Still not AI though. it’s a very dumb timer system that would be very useful. 1950’s tech could do this!

always xkcd

And it would improve your life zero. That is what is absurd about LLM’s in their current iteration, they provide almost no benefit to a vast majority of people.

All a learning model would do for a fridge is send you advertisements for whatever garbage food is on sale. Could it make recipes based on what you have? Tell it you want to slowly get healthier and have it assist with grocery selection?

Nah, fuck you and buy stuff.

Exactly, it’s entirely about extra monetization. They all think in terms of hype and money, never in terms of life improvement.

I’d actually love AI to control something like a home assistant setup by learning how I like things and predicting change (mind you I still need to get it set up at all). But most people don’t even want a smart home.

Make something that makes the unpleasant parts of life easier and people will be happy with it

I want AI in my fridge for sure. Grocery shopping sucks. Forgetting how old something was sucks. Letting all the cool out to crawl around to see what I have sucks.

I want my fridge to be like the Sims, just get deliveries or pickup the order. Fill it out and get told what ingredients I have. Bonus points if you can just tell me what recipes I can cook right now, even better if I can ask for time frame.

That would be sick!

Still not going to give ecorp all of my data or put some half-baked internet of things device on my WiFi for it. But it would be cool.

Ye, that’d be sick! and that’s also not what was being sold! this fridge did none of that. What exactly made it “AI” I didn’t bother to find out, but I work in IT. I guarantee it wasn’t this. Also, not convinced I want my fridge to be able to spend my money for me. I want to be able to have a Ramen month if I need/want

Automatic spending definitely takes next level of trust for sure!

Absolutely this. There IS a scenario in which I would love a “smart” or “AI” fridge, but it’s gotta be damn impressive to even be worth my time.

It needs to know everything in my fridge, how long it’s been there and its expiration date, and I want it to build grocery lists for me based on what is low, and let me know ahead of time that I should use something up that’s going bad soon. Bonus points if it recommends some options for how to do that based on my tastes. And I want to do this without having to manually input or remove everything.
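
For what it’s worth, the bookkeeping half of that wish list is the easy part. Below is a minimal sketch, assuming the fridge already knows its contents somehow; the `Item` fields, the `restock_below` threshold, and the sample data are all made up for illustration. The hard part the comment points at, sensing items without manual input, is not attempted here.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class Item:
    name: str
    added: date           # when the item went into the fridge
    expires: date         # expiration date
    quantity: float       # amount left, in whatever unit suits the item
    restock_below: float  # hypothetical threshold for "running low"


def use_soon(items, today, within_days=3):
    """Items still in stock that expire within the next few days, soonest first."""
    cutoff = today + timedelta(days=within_days)
    soon = [i for i in items if i.quantity > 0 and i.expires <= cutoff]
    return sorted(soon, key=lambda i: i.expires)


def grocery_list(items):
    """Names of items whose quantity has dropped below their restock threshold."""
    return [i.name for i in items if i.quantity < i.restock_below]


today = date(2024, 7, 1)
fridge = [
    Item("milk", date(2024, 6, 25), date(2024, 7, 2), 0.2, 0.5),
    Item("eggs", date(2024, 6, 20), date(2024, 7, 15), 10, 4),
    Item("spinach", date(2024, 6, 29), date(2024, 7, 3), 1, 1),
]

expiring = [i.name for i in use_soon(fridge, today)]
to_buy = grocery_list(fridge)
print("use soon:", expiring)
print("buy:", to_buy)
```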

But we’re still SO far from being able to do this reliably, let alone at any kind of acceptable price point, and yet fridge makers keep shoving out dumb fridges with a screen on them and calling them “smart”. I hate it.

For sure playing ads on my fridge or just spying on me aren’t “smart” at all to me.

Would you be willing to destroy the whole planet in order to make millions of these fridges?

A couple planets! /s

I would be willing to never have one in my life time just to see climate change slowed to a rate nature can naturally adapt and people can afford to adjust to honestly.

I don’t foresee it being any worse than food waste and wasted grocery trips are for me.

Computer vision, a couple services, a db, and network access can be pretty lightweight. Any extra voice, natural language interface, etc. is probably overkill and, without special hardware (and the ecological cost of that), not worth it from an energy-use standpoint.

All speculation of course

I’m still pissed about the fact that I can’t buy a reasonably priced TV that doesn’t have WiFi. I should never have left my old LG Plasma bolted to the wall of my previous house when I sold it. That thing had a fantastic picture and doubled as a space heater in the winter.

Projector gang checking in 🤓📽️

Everything alright here?

You can always join us in the peaceful realm of select input.

(there are still WiFi-free options)

what’s the affordable option for daytime viewing with the curtains open?

Audio description.

To remind you when you should go buy groceries haha

Jian-Yang wants a smart fridge. To make you feel bad. Because you’re fat and you’re poor.

…just under 2,000 voters said “yes.”

And those people probably work in some area related to LLMs.

It’s practically a meme at this point:

Nobody:

Chip makers: People want us to add AI to our chips!

The even crazier part to me is some chip makers we were working with pulled out of guaranteed projects with reasonably decent revenue to chase AI instead

We had to redesign our boards and they paid us the penalties in our contract for not delivering so they could put more of their fab time towards AI

That’s absolutely crazy. Taking the Chicago School MBA philosophy to things as time consuming and expensive to set up as silicon production.

This is one of those weird things that venture capital does sometimes.

VC is injecting cash into tech right now at obscene levels because they think that AI is going to be hugely profitable in the near future.

The tech industry is happily taking that money and using it to develop what they can, but it turns out the majority of the public don’t really want the tool if it means they have to pay extra for it. Especially in its current state, where the information it spits out is far from reliable.

I don’t want it outside of heavily sandboxed and limited scope applications. I don’t get why people want an agent of chaos fucking with all their files and systems they’ve cobbled together

NDAs also legally prevent you from using this forced garbage too. Companies are going to get screwed over by other companies, capitalism is gonna implode hopefully

I have to endure a meeting at my company next week to come up with ideas on how we can wedge AI into our products because the dumbass venture capitalist firm that owns our company wants it. I have been opting not to turn on video because I don’t think I can control the cringe responses on my face.

Back in the 90s in college I took a Technology course, which discussed how technology has historically developed, why some things are adopted and other seemingly good ideas don’t make it.

One of the things that is required for a technology to succeed is public acceptance. That is why AI is doomed.

AI is not doomed, LLMs or consumer AI products, might be

In industries AI is and will be used (though probably not LLMs, still, except in a few niche use cases)

Yeah, I mean the AI being shoveled at us by techbros. Actual ML stuff is currently and will continue to be useful for all sorts of not-sexy but vital research and production tasks. I do task automation for my job and I use things like transcription models and OCR, my company uses smart sorting using rapid image recognition and other really cool uses for computers to do things that humans are bad at. It’s things like LLMs that just aren’t there - yet. I have seen very early research on AI that is trained to actually understand language and learns by context, it’s years away, but eventually we might see AI that really can do what the current AI companies are claiming.

There’s really no point unless you work in specific fields that benefit from AI.

Meanwhile every large corpo tries to shove AI into every possible place they can. They’d introduce ChatGPT to your toilet seat if they could

“Shits are frequently classified into three basic types…” and then gives 5 paragraphs of bland guff

With how much scraping of reddit they do, there’s no way it doesn’t try ordering a poop knife off of Amazon for you.

It’s seven types, actually, and it’s called the Bristol scale, after the Bristol Royal Infirmary where it was developed.

I know. But I was satirising GPT’s bland writing style, not providing facts

Imagining a chatgpt toilet seat made me feel uncomfortable

Aw maaaaan. I thought you were going to link that youtube sketch I can’t find anymore. Hide and go poop.

Don’t worry, if Apple does it, it will sell like fresh cookies worldwide

Idk, they can’t even sell VR.

Someone did a demo recently of AI acceleration for 3d upscaling (think DLSS/AMD’s equivalent) and it showed a nice boost in performance. It could be useful in the future.

I think it’s kind of like ray tracing. We don’t have a real use for it now, but eventually someone will figure out something that it’s actually good for and use it.

AI acceleration for 3d upscaling

Isn’t that not only similar to, but exactly what DLSS already is? A neural network that upscales games?

But instead of relying on the GPU to power it the dedicated AI chip did the work. Like it had its own distinct chip on the graphics card that would handle the upscaling.

I forget who demoed it, and searching for anything related to “AI” and “upscaling” gets buried with just what they’re already doing.

That’s already the nvidia approach, upscaling runs on the tensor cores.

And no it’s not something magical it’s just matrix math. AI workloads are lots of convolutions on gigantic, low-precision, floating point matrices. Low-precision because neural networks are robust against random perturbation and more rounding is exactly that, random perturbations, there’s no point in spending electricity and heat on high precision if it doesn’t make the output any better.
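
To make the “rounding is just a small perturbation” point concrete, here is a minimal pure-Python sketch. It uses uniform 8-bit-style rounding as a stand-in for a real low-precision float format (an assumption made for simplicity, not how any particular hardware encodes its numbers), and the weights and inputs are random values rather than a trained network.

```python
import random

LEVELS = 127  # signed 8-bit-style grid: values snap to multiples of 1/127


def quantize(value, levels=LEVELS):
    """Round a value in [-1, 1] to the nearest step of a coarse uniform grid."""
    return round(value * levels) / levels


def dot(a, b):
    """Plain dot product, the core operation inside matrix multiplication."""
    return sum(x * y for x, y in zip(a, b))


rng = random.Random(0)
n = 256
weights = [rng.uniform(-1, 1) for _ in range(n)]
inputs = [rng.uniform(-1, 1) for _ in range(n)]

exact = dot(weights, inputs)
approx = dot([quantize(w) for w in weights], inputs)
error = abs(exact - approx)

# Each weight moves by at most half a grid step, which bounds the total error.
worst_case = sum(abs(x) for x in inputs) / (2 * LEVELS)

print(f"exact={exact:.4f} approx={approx:.4f} error={error:.4f} bound={worst_case:.4f}")
```

In practice the rounding errors mostly cancel each other out, so the observed error tends to sit well below the worst-case bound.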

The kicker? Those tensor cores are less complicated than ordinary GPU cores. For general-purpose hardware and that also includes consumer-grade GPUs it’s way more sensible to make sure the ALUs can deal with 8-bit floats and leave everything else the same. That stuff is going to be standard by the next generation of even potatoes: Every SoC with an included GPU has enough oomph to sensibly run reasonable inference loads. And with “reasonable” I mean actually quite big, as far as I’m aware e.g. firefox’s inbuilt translation runs on the CPU, the models are small enough.

Nvidia OTOH is very much in the market for AI accelerators and figured it could corner the upscaling market and sell another new generation of cards by making their software rely on those cores even though it could run on the other cores. As AMD demonstrated, their stuff also runs on nvidia hardware.

What’s actually special sauce in that area are the RT cores, that is, accelerators for ray casting through BVH trees. That’s indeed specialised hardware but those things are nowhere near fast enough to compute enough rays for even remotely tolerable outputs which is where all that upscaling/denoising comes into play.

Found it.

I can’t find a picture of the PCB though, that might have been a leak pre reveal and now that it’s revealed good luck finding it.

Having to send full frames off of the GPU for extra processing has got to come with some extra latency/problems compared to just doing it actually on the gpu… and I’d be shocked if they have motion vectors and other engine stuff that DLSS has that would require the games to be specifically modified for this adaptation. IDK, but I don’t think we have enough details about this to really judge whether it’s useful or not, although I’m leaning on the side of ‘not’ for this particular implementation. They never showed any actual comparisons to dlss either.

As a side note, I found this other article on the same topic where they obviously didn’t know what they were talking about and mixed up frame rates and power consumption, it’s very entertaining to read

The NPU was able to lower the frame rate in Cyberpunk from 263.2 to 205.3, saving 22% on power consumption, and probably making fan noise less noticeable. In Final Fantasy, frame rates dropped from 338.6 to 262.9, resulting in a power saving of 22.4% according to PowerColor’s display. Power consumption also dropped considerably, as it shows Final Fantasy consuming 338W without the NPU, and 261W with it enabled.
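
A quick check of the quoted numbers backs up the mix-up claim. In the sketch below (only the figures come from the quote, the helper name is made up), the percentage drops between the so-called frame rates come out the same as the stated power savings, which suggests those figures were power readings all along.

```python
def percent_drop(before, after):
    """Relative decrease from `before` to `after`, in percent."""
    return 100 * (before - after) / before


cyberpunk = percent_drop(263.2, 205.3)      # quoted as a "frame rate" drop
final_fantasy = percent_drop(338.6, 262.9)  # quoted as a "frame rate" drop
watts = percent_drop(338, 261)              # quoted as power draw in watts

print(f"Cyberpunk: {cyberpunk:.1f}%")
print(f"Final Fantasy: {final_fantasy:.1f}%")
print(f"Final Fantasy watts: {watts:.1f}%")
```

The first two results line up with the 22% and 22.4% “power savings” in the quote, and the Final Fantasy “frame rates” are nearly the same numbers as the wattages given one sentence later.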

I’ve been trying to find some better/original sources [1] [2] [3] and from what I can gather it’s even worse. It’s not even an upscaler of any kind, it apparently uses an NPU just to control clocks and fan speeds to reduce power draw, dropping FPS by ~10% in the process.

So yeah, I’m not really sure why they needed an NPU to figure out that running a GPU at its limit has always been wildly inefficient. Outside of getting that investor money of course.

Nvidia’s tensor cores are inside the GPU, this was outside the GPU, but on the same card (the PCB looked like an abomination). If I remember right in total it used slightly less power, but performed about 30% faster than normal DLSS.

from the articles I’ve found it sounds like they’re comparing it to native…

We have plenty of real uses for ray tracing right now, from blender to whatever that avatar game was doing to lumen to partial rt to full path tracing, you just can’t do real time GI with any semblance of fine detail without RT from what I’ve seen (although the lumen sdf mode gets pretty close)

although the rt cores themselves are more debatably useful, they still give a decent performance boost most of the time over “software” rt

Which would be appropriate, because with AI, there’s nothing but shit in it.

One of our helpdesk told me about his amazing idea for our software the other day.

“We should integrate AI into it…”

“Right? And have it do what?”

“Uh, I don’t know”

This from the same man who came up with an idea for orange juice pumped directly into your home, and you pay with crypto.

And the scary thing is, I can imagine these things coming out of the mouths of people in actual positions of power, where laughing at them might actually get people fired…

orange juice pumped directly into your home, and you pay with crypto

[Furiously taking notes]

who came up with an idea for orange juice pumped directly into your home

That’s maybe not as cool, but a pneumatic city-wide mail system would be cool. Too expensive and hard to maintain, not even talking about pests and bacteria which would live there, but imagine ordering a milkshake with some fries and in 10 minutes hearing “thump”, opening that little door in the wall of your apartment and seeing a package there (it’ll be a mess inside though).

This fucker seriously proposed brawndo on tap. Except perishable. Jesus fucking Christ.

And what do the companies take away from this? “Cool, we just won’t leave you any other options.”

Plenty of companies offer sane, normal solutions and make bank in the process

History has shown that not to be the case.

Plenty of history shows it is.

I would pay extra to make sure that there is no AI anywhere near my hardware.

I don’t mind the hardware. It can be useful.

What I do mind is the software running on my PC sending all my personal information and screenshots and keystrokes to a corporation that will use all of it for profit, to build a user profile, to send targeted advertisements, and that can potentially be used against me.

AI for IT companies is looking more and more like 3D was for movie industry

All fanfare and overhype, a small handful of examples that do seem a solid step forward with millions others that are just a polished turd. Massive investment for something the market has not demanded

It’s just a gimmick, a new “feature” to justify higher product prices.

barely a feature, just a buzzword

I would pay for a power efficient AI expansion card. So I can self host AI services easily without needing a 3000€ gpu that consumes 10 times more than the rest of my pc.

I would consider it a reason to upgrade my phone a year earlier than otherwise. I don’t know what ai will stick as useful, but most likely I’ll use it from my phone, and I want there to be at least a chance of on-device ai rather than “all your data are belong to us” ai

I will be looking into AMD Halo Strix’ performance as a poor man’s GPU to run LLMs and some scientific codes locally.

Any “ai” hardware you buy today will be obsolete so fast it will make your dick bleed

it will just be not as fast as the newer stuff

it will just be ~~not as fast~~ even more slow as the newer stuff

They want you to buy the hardware and pay for the additional energy costs so they can deliver clippy 2.0, the watching-you-wank-edition.

If you unbend him, clippy could be very useful 🍆📎

God damn you.

Sound advice.

I hate that I understood this

Well, NPUs are not on par with modern GPUs. A general-purpose GPU has more raw power than most NPUs, but when you look at what the electricity costs, you see that NPUs are way more efficient at AI tasks (which are not only chatbots).

This is yet another dent in the “exponential growth AGI by 2028” argument I see popping up a lot. Despite what the likes of Kurzweil, Musk, etc would have you believe, AI is severely overhyped and will take decades to fully materialise.

You have to understand that most of what you read about is mainly if not all hype. AI, self driving cars, LLM’s, job automation, robots, etc are buzzwords that the media loves to talk about to generate clicks. But the reality is that all of this stuff is extremely hyped up, with not much substance behind it.

It’s no wonder that the vast majority of people hate AI. You only have to look at self driving cars being unable to handle fog and rain after decades of research, or dumb LLM’s (still dumb after all this time) to see why. The only real things that have progressed quickly since the 80s are cell phones, computers, etc. Electric cars, self driving cars, stem cells, AI, etc etc have all not progressed nearly as rapidly. And even the electronics stuff is slowing down soon due to the end of Moore’s Law.

There is more to AI than self driving cars and LLMs.

For example, I work at a company that trained a deep learning model to count potatoes in a field. The computer can count so much faster than we can, it’s incredible. There are many useful, but not so glamorous, applications for this sort of technology.

I think it’s more that we will slowly piece together bits of useful AI while the hyped areas that can’t deliver will die out.

Machine vision is absolutely the most slam-dunk case where “AI” does work and has practical applications. However, it was doing so a few years before the current craze. Basically the current craze was driven by ChatGPT, with people overestimating how far that will go in the short term because it almost acts like a human conversation, and that seemed so powerful.

That’s why I love ai: I know it’s been a huge part of phone camera improvements in the last few years.

I seem to get more use out of voice assistants because I know how to speak their language, but if language processing noticeably improves, that will be huge

Motion detection and person detection have been a revolution in cheap home cameras by very reliably flagging video of interest, but there’s always room for improvement. More importantly I want to be able to do that processing real time, on a device that doesn’t consume much power

None of what you’re describing is anything close to “intelligence”. And it’s all existed before this nonsense hype cycle.

AI camera generation isn’t camera improvement.

When my phone takes a clearer picture in darker situations and catches a recognizable action shot of my kid across a soccer field, it’s a better camera. It doesn’t matter whether the improvements were hardware or software, or even how true to life in some cases, it’s a better camera

Apple has done a great job of not only making cameras physically better, but integrating LiDAR for faster focus, image composition across multiple lenses, improved low light pictures, and post-processing to make dramatically better pictures in a wide range of conditions

That’s nice and all, but that’s nowhere close to a real intelligence. That’s just an algorithm that has “learned” what a potato is.

So… A machine is “intelligent” because it can count potatoes? This sort of nonsense is a huge part of the problem.

deleted by creator

Idk robots are absolutely here and used. They’re just more Honda than Jetsons. I work in manufacturing and even in a shithole plant there are dozens of robots at minimum unless everything is skilled labor.

I might be wrong but those do not make use of AI do they? It’s just programming for some repetitive tasks.

They use machine learning these days in the nice kind, but I misinterpreted you. I interpreted you as saying that robots were an example of hype like AI is, not that using AI in robots is hype. The ML in robots is stuff like computer vision to sort defects, detect expected variations, and other similar tasks. It’s definitely far more advanced than back in the day, but it’s still not what people think.

No, but I would pay good money for a freely programmable FPGA coprocessor.

If the AI chip is implemented as one, and is useful for other things I’m sold.

I think manufacturers need to get a lot more creative about simplified computing. The RPi Pico’s GPIO engine is powerful yet simple, and a good example of what is possible with some good application analysis and forethought.

Problem for the big market is that it’s hardly profitable. In fact, make things too easily multipurpose and you undercut your specialized devices’ opportunities. Why buy a smart device for 500 dollars that requires a monthly subscription when you could get a 100 dollar device with a popular preload of a solution on it?

Like when the WRT54G came out in the day and OpenWRT basically drove Cisco to buy out Linksys to neuter the “home router” to stop it displacing expensive products in the business sector. The WRT54G was the best product for the market, but not the best product to exist for vendor profitability.

I have a few Pi Picos but I didn’t know about it. Can you please elaborate? I’ve been using them just like any other ESP32, STM32, or ESP8266 I have.

Which part of the Pico are you referring to specifically? Never heard the term “GPIO engine” before. Is that sort of like the USB stack but for GPIO?

I think they meant PIO (programmable IO). It’s like a small processor tied to some of the IO pins. There’s a very small set of instructions and some state machines.

It can be used to implement your own IO protocols without worrying about the issues that come with bit-banging from the CPU.

The GPIO engine is a simple state machine that can be programmed to implement high-speed data transfer, digital video output, and many other purposes. It is one of the best and most innovative features on the Pico.
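
To give a feel for the idea, here is a toy simulation in plain Python. It is not the real PIO instruction set and does not use the Pico’s actual API; the class, its fields, and the one-bit-per-tick behaviour are all simplifications invented for illustration. The point is only that the CPU queues data and walks away, while a tiny independent state machine clocks the bits out on its own schedule.

```python
from collections import deque


class ToyShiftOutMachine:
    """Toy model of a PIO-style state machine that shifts out one bit per tick."""

    def __init__(self):
        self.fifo = deque()  # bytes queued by the "CPU"
        self.shift_reg = 0   # byte currently being shifted out
        self.bits_left = 0   # bits of shift_reg not yet emitted
        self.pin = 0         # current level on the output pin

    def put(self, byte):
        """CPU side: queue a byte and return immediately."""
        self.fifo.append(byte & 0xFF)

    def tick(self):
        """One clock cycle; returns True if a bit was driven onto the pin."""
        if self.bits_left == 0:
            if not self.fifo:
                return False  # nothing queued, so the machine stalls
            self.shift_reg = self.fifo.popleft()
            self.bits_left = 8
        self.pin = (self.shift_reg >> 7) & 1  # most significant bit first
        self.shift_reg = (self.shift_reg << 1) & 0xFF
        self.bits_left -= 1
        return True


def run(machine, max_ticks=64):
    """Clock the machine and record the pin level for each emitted bit."""
    levels = []
    for _ in range(max_ticks):
        if machine.tick():
            levels.append(machine.pin)
    return levels


sm = ToyShiftOutMachine()
sm.put(0xA5)
waveform = run(sm)
print(waveform)
```

On real hardware the timing is what matters: the state machine runs on its own clock, so the bit timing does not jitter when the CPU is busy with interrupts or other work, which is the usual problem with bit-banging.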

Remember when the IoT was very new? There were similar grumblings of “Why would I want to talk to my refrigerator?” And now more and more things are just IoT connected for no reason.

I suspect AI will follow a similar path into the consumer mainstream.

IoT became very valuable, for them at least, as data collecting devices.

I feel like the local only devices do have a place in the home automation sector (e.g. home assistant compatible with no cloud integrations).

Most vendors want to lock you into their crappy cloud system that will someday be offline and render things useless however.