- cross-posted to:

- [email protected]

The logical end of the ‘Solution to bad speech is better speech’ has arrived in the age of state-sponsored social media propaganda bots versus AI-driven bots arguing back

Shit. I could have told them to just block lemmygrad for like $100 😂🤣😂

I’ll do it for $90!

Sold! $190 to watch the two of y’all fight Russian and Nazi sympathizers. I’m selling this on pay-per-view

i would consider tuning into that, what you charging for a front seat to the action?

Tree-fitty

Best I can do is an upvote.

Please tell me how to block a full instance

Just a reminder, LLMs are not designed to provide truth, but rather to generate natural-sounding text.

We can certainly argue over what they’re designed to do, and I definitely agree that’s the goal of them. The reality though is that on some level it is impossible to separate assertions from the words that describe them. Language itself is designed to communicate ideas, you can’t really create language without also communicating ideas, otherwise every sentence from an LLM would just look like

“Has Anyone Really Been Far Even as Decided to Use Even Go Want to do Look More Like”

They will readily cite information that was fed to them. Sometimes it is on point, sometimes not. That starts to be a bit of an ethical discussion on whether it is okay for them to paraphrase information they were fed, and without citing it as a source of the info.

In a perfect world we should be able to expand a whole learning tree to trace back how the model pieced together each word and point of data it is citing, kind of like an advanced Wikipedia article. Then you could take the typical synopsis that the model provides and dig into it to judge for yourself if it’s accurate or not. From a research standpoint I view info you collect from a language model as a step down from a secondary source and we should be able to easily see how it gets to that info.

LLMs are at least a quaternary(?) source. They’re scraping secondary/tertiary sources. As such they’re little better than asking passersby on the street. You might get a general idea of what the zeitgeist is, but how true any particular statement actually is will vary wildly.

Math itself is designed to describe relationships between things. That doesn’t mean you can’t mock up a ‘reasonable seeming’ equation that is absolute nonsense after further examination, but that a layman will take as ‘true enough’.

LLMs don’t cite things. They provide an approximation of what a human might write. They don’t know what they’re writing or how it relates to the ‘real world’ any more than any other centerpiece of a Chinese Room.

After WWII in Germany, the cool young people knew you couldn’t trust anyone over 30.

Nowadays, cool people need to understand that you can’t trust anything bland and sanitized-sounding on the internet. For the rest of our lives, your personhood is on trial with everything you say.

It could tear society apart before we even know it’s happening.

> For the rest of our lives, your personhood is on trial with everything you say.

bravo, man really well said

This was why I was so furious about Elon Musk’s blue checkmark debacle. He had a chance to prove that a gigantic part of the internet was a) human and b) non-duplicate. I was really shocked by how badly an apparently smart person fucked it up. Not so smart, it turns out.

EM loses the ability to infuriate you when you understand him as a narcissist.

> Nowadays, cool people need to understand that you can’t trust anything bland and sanitized-sounding on the internet.

This is bad news for my communication style.

Moderate erasure

Same but kinda not same

Ah yes, American truths like “Iraq has WMDs and that’s why invading them is the fair and just thing to do,” “abortion is bad for human rights,” “the US isn’t collecting all of your internet traffic because that would be a violation of privacy,” and “this CIA-funded coup of a democratically-elected government will definitely help spread democracy around the world.”

This researcher has built a pro-America AI disinformation machine for $400. I expect that, like most American media, it will start citing “independent think tanks” like Atlantic Council (which, coincidentally, is staffed mostly by ex-US intelligence and receives funding from US intelligence agencies) and use reports gathered by “independent sources” such as the US 4th PsyOps Airborne (which, per their recent recruiting videos, admits to orchestrating large-scale protests including Euromaidan, Tiananmen Square, and others).

Have you seen any tweet this bot generated that would contain misinformation? Because I haven’t.

What is the context for Iraq WMDs? I haven’t seen it anywhere in the article?

Is anyone arguing that, at the time of the Iraq War, it wasn’t considered a “truth” in America that Iraq was developing WMDs and that anything to the contrary was considered disinformation?

So is the bot not pointing out obvious lies with links to factual data, or what is your point? Can you link me to an example of the bot using shaky arguments?

And the WMD claims stood on shaky legs from the very beginning; many countries, like Germany, opposed the use of force in Iraq. Perhaps we’d have benefited from a bot correcting false narratives in real time had this technology been available at the time.

The bot doesn’t know what’s “real” or not though - it’s a large language model, not a model of the real world. All it knows is what it’s been told in its training data.

For some reason the 5000+ chemical weapons removed from Iraq never seem to count as WMDs.

That’s because they aren’t.

Chemical weapons cause severe agony, but tend to kill a limited number of people.

According to the UN:

Weapons of mass destruction (WMDs) constitute a class of weaponry with the potential to:

- Produce in a single moment an enormous destructive effect capable to kill millions of civilians, jeopardize the natural environment, and fundamentally alter the lives of future generations through their catastrophic effects;
- Cause death or serious injury of people through toxic or poisonous chemicals;
- Disseminate disease-causing organisms or toxins to harm or kill humans, animals or plants;
- Deliver nuclear explosive devices, chemical, biological or toxin agents to use them for hostile purposes or in armed conflict.

So, they were WMDs

Iraq had those same stores of chemical weapons since the 1980s and was in the slow and arduous process of dismantling them (it had dismantled something like 90-95% of its WMDs by 2003 and was not stockpiling replacements). Given the lack of new production, many of the chemical weapons supposedly in Iraq’s stockpile would have turned harmless due to the short shelf life of chemical weapons.

By and large, people used this imagined idea that Iraq was still developing nuclear weapons as the justification for the invasion. American media ran stories about how aluminum tubes “used for uranium enrichment” were being imported by Iraq. American media brought out Iraqi defectors of questionable credibility who talked about Iraq’s burgeoning nuclear capability. American intelligence claimed that Iraq was actively seeking nuclear weapons development. Of course, all of these claims were entirely false.

By this definition, 9/11 proves that a jumbo jet is a WMD. I don’t know if I can call a jumbo jet a WMD.

9/11 only had its effect because they hit the Twin Towers; chemical weapons can kill entire areas

I don’t understand the point you’re making. If airplanes hitting a building can do the same damage as chemical weapons…

Chemical weapons can kill entire areas just like planes hitting buildings. I’m a licensed pilot.

No, the jet didn’t kill millions.

It didn’t need to kill millions. I’m just saying that the jet hitting a building kills as many as a chemical weapon can.

Chemical weapons are not going to kill more people than 9/11.

How the fuck are 14 155mm shells filled with mustard gas from 1980 and a few kilograms of expired growth media going to kill millions of people?

What makes you say that this new disinformation machine is pro-America?

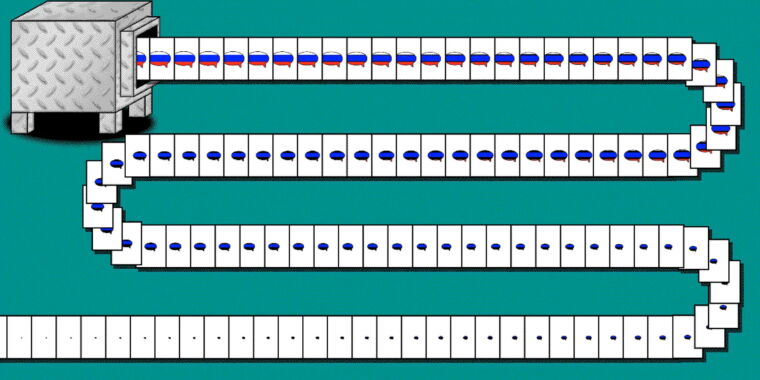

Some actual low-res examples:

That’s way worse than I imagined. Like, $400 seems like too much money spent

This is the best summary I could come up with:

Russian criticism of the US is far from unusual, but CounterCloud’s material pushing back was: The tweets, the articles, and even the journalists and news sites were crafted entirely by artificial intelligence algorithms, according to the person behind the project, who goes by the name Nea Paw and says it is designed to highlight the danger of mass-produced AI disinformation.

Mitigations are possible, such as educating users to be watchful for manipulative AI-generated content, making generative AI systems try to block misuse, or equipping browsers with AI-detection tools.

In recent years, disinformation researchers have warned that AI language models could be used to craft highly personalized propaganda campaigns, and to power social media accounts that interact with users in sophisticated ways.

Renee DiResta, technical research manager for the Stanford Internet Observatory, which tracks information campaigns, says the articles and journalist profiles generated as part of the CounterCloud project are fairly convincing.

“In addition to government actors, social media management agencies and mercenaries who offer influence operations services will no doubt pick up these tools and incorporate them into their workflows,” DiResta says.

The CEO of OpenAI, Sam Altman, said in a Tweet last month that he is concerned that his company’s artificial intelligence could be used to create tailored, automated disinformation on a massive scale.

The original article contains 806 words, the summary contains 215 words. Saved 73%. I’m a bot and I’m open source!

So is it against Russian disinformation, or does it make anti-Russia disinformation? I’d hope the former; it’s easy enough to refute Russia with correct information.

I know it’s taboo but hear me out - you could read the article and find out

Per the article, it’s the latter.

> The tweets, the articles, and even the journalists and news sites were crafted entirely by artificial intelligence algorithms, according to the person behind the project, who goes by the name Nea Paw and says it is designed to highlight the danger of mass-produced AI disinformation.

OpenAI is so concerned that AI will do x and y bad thing but still pour all these resources into developing it further.

There are other endeavors where a great deal of the effort is put into making it safe. Space travel for example.

I wish that was the case for AI development. AI safety is a notoriously underfunded, understaffed and still overall neglected field.

OpenAI isn’t responsible for what Russians do with it any more than any company is for how users use their product

If someone knows that what they’re about to create is going to do harm like this, they shoulder some of the responsibility for those consequences. They don’t just get to wash their hands of it as if they had no idea.

Why not? The people who are to blame are the people committing the act.

The thing itself has no ethical or moral impact until it’s used by a person. I think it feels good to blame an inventor, but that’s scapegoating the real culprits. The only way I see your argument making sense is if they intended their tools to be used for unethical reasons.

Because people should consider the pros and cons of what they work on, not just pretend that none of the responsibility for those cons is theirs. AI is one of the things that could wipe out humanity. Not in the Terminator sense but through unparalleled disruption of the economy and by facilitating a wedge between people through the production of propaganda like none that we’ve ever seen, e.g. deepfakes, personally tailored propaganda, etc.

> to wipe out humanity

Does it? Doesn’t that threat exist even without AI? In its current state it’s a glorified chatbot. Get rid of it, and we still have every think tank filled with quants, statisticians, social scientists and marketing teams pushing all that propaganda. It’s not AI doing it. It’s humans.

But AI does have potential to also develop new medicines. New materials. It has potential for a lot more good.

It also has a lot of potential to give people some powerful pocket access to some basic services they normally wouldn’t have. Imagine an AI trained to help people sort out their finances. Act like an r/askdocs. Help with questions about new hobbies.

So where you see panic, other people see hope. And it isn’t the inventor’s job to tell you or others how to use something.

If we destroy ourselves with every bit of advancement then we deserve it. It would be an inevitability.

> Does it? Doesn’t that threat exist even without AI

Yes. Your point?

That AI isn’t the issue

Not to mention that even if one inventor decides not to release their creation, eventually someone else will make something similar.

Would you say then that our efforts to hinder access to dangerous information aren’t working?

In that case, would you object to the posting of detailed schematics on the internet for the creation of nuclear weapons?

No, I wouldn’t. In general I’m opposed to any hiding/censoring of information

That concern is feigned, for PR.

The incentives to continue development are far too great; if one firm abandons the project, that just means that AI will be developed by a less ethical firm. This is why arguing that AI is bad in-and-of-itself is a moderately effective way to reduce the ethics of the average AI developer.

So this is why Elon is suddenly more upset than usual about bots

> The Federal Election Commission has said it may limit the use of deepfakes in political ads.

Any use of deepfakes should serve as immediate disqualification/termination for any political candidate, and any donations immediately reversed.

That’s too easy. Run a Deepfake for the other guy. Instead dissolve whatever organization ran it. And if that’s someone’s campaign then so be it.

Honestly, if you look at it in a vacuum, this looks pretty similar to what the other side is doing.

It’s a bot that draws from its own side’s narratives and pushes that line.

Take away Russia from the picture and think about how often our media pushes a spin on other subjects that isn’t exactly the truth.

Doesn’t look so much like “social media propaganda bots versus AI-driven bots arguing back” as much as propaganda bots on both sides spewing whatever their masters want us to see.

Never mind the fake news for a second. Which side has the most reliable real news?

You can’t take away Russia from the picture, because the fact that the bots are arguing against misinformation while using the truth is salient.

Great, now take the same freedom fighter bots and tell them to argue IP policy on social media online. We can hear all about the right minded ways to think about intellectual property and how all the comments around here are misinformation.

It’s like people lose their minds when you throw an enemy into the sentence. I don’t think these people crafting propaganda bots are heroes, even if they are on “my” team. Go down this road, and you can throw away forums like Lemmy, it’ll just be bots arguing with bots.

Not to mention, it’s very probable they’re not on the side of truth, but rather more propaganda.

Please quote me as to where I called bot programmers heroes

For the record, I don’t particularly like bots of any kind. That being said, troll farms are obviously malicious and negative as well, and far more pernicious than bots designed to make counter arguments to those troll farms. Context matters, and if social media orgs - including Lemmy - can’t find a way to combat troll operations, then I don’t see the further harm in someone botting out the truth to combat vicious propagandists out to apologize for fascists.

Hm. Yeah, banning bots would be better, but it would be more expensive than fighting troll farms with AI. That is valid. Still, I consider AI counter arguments a temporary solution.

The best solution is organized and effective administrative enforcement, but neither Reddit, Twitter, nor Facebook is interested in doing that, and Lemmy is incapable of doing it even if they wanted to

Yes, that’s my point. There’s no capability within Lemmy to effectively screen out bad actors. It’s all dependent on volunteer admins, and when you’re trying to play whack a mole with malicious instances and people bouncing their accounts around between legitimate instances, it becomes basically impossible.

Not saying that the fediverse is a bad idea. I like it. But this is a key potential downside, and if Lemmy and other fediverse clients become popular enough, we will see widespread botting, and it will be an issue.

Only $400? Where can I buy one?
