- cross-posted to:

- [email protected]

So it’s a fancy proxy to existing AI offerings?

It’s a way to run models on your local machine behind an API that’s compatible with OpenAI’s, so apps that normally rely on OpenAI can talk to it instead.
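To make the "OpenAI-compatible API" part concrete, here is a minimal sketch of what a client call could look like, using only the Python standard library. The address `http://localhost:8080/v1` and the model name `my-local-model` are assumptions for illustration; use whatever host, port, and model name your own setup actually exposes.

```python
import json
import urllib.request

# Assumption: a local OpenAI-compatible server listens here. Adjust to your setup.
BASE_URL = "http://localhost:8080/v1"


def build_chat_request(prompt, model="my-local-model", base_url=BASE_URL):
    """Build a POST request in the OpenAI chat-completions format.

    "my-local-model" is a placeholder name, not a real model.
    """
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        f"{base_url}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def chat(prompt, **kwargs):
    """Send the prompt to the local server and return the reply text."""
    with urllib.request.urlopen(build_chat_request(prompt, **kwargs)) as resp:
        body = json.load(resp)
    # OpenAI-style responses put the reply under choices[0].message.content
    return body["choices"][0]["message"]["content"]
```

With a server running, `chat("Say hello in one sentence.")` would return the model's reply. Apps written for OpenAI usually only need their base URL pointed at the local address.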

Hm so it downloads fixed models and works without an internet connection? Interesting.

Right, you can download any publicly available model and run it without using the internet. Caveat is that you do need a relatively fast machine to make it performant.

For reference, the oldest card I have that Vulkan supports is an RX 560 that I bought in 2017 (I’m on GNU/Linux with amdgpu and the RADV Mesa driver, a.k.a. “The Default”). Most medium models run on it at around 6 to 10 tokens/s. Some crawl along below 6 tokens/s, though, and get slower the longer their answer is, probably because parts of the model are in RAM, since that card has “only” 4GB of VRAM. Models that fully fit in VRAM are a lot faster.

I can run Qwen 2.5 Coder 14B Q4_k_m on CPU at only a little above 1 t/s, but it’s worth it when I just want to have it look at whatever code I have without disclosing it to corporations that don’t have my best interests in mind.
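A rough back-of-the-envelope sketch of why a 14B model at 4-bit quantization doesn't fit on a 4GB card: weight size is roughly parameter count times bits per weight, divided by 8. The 4.85 bits per weight used below is an assumed approximate average for Q4_K_M, and `overhead_gb` is a hypothetical placeholder for context cache and buffers, not a measured value.

```python
def estimate_weights_gb(n_params_billion, bits_per_weight):
    """Approximate size of the quantized weights in GB (10^9 bytes)."""
    return n_params_billion * bits_per_weight / 8


def fits_in_vram(n_params_billion, bits_per_weight, vram_gb, overhead_gb=1.0):
    """Rough check: weights plus an assumed overhead must fit in VRAM."""
    needed = estimate_weights_gb(n_params_billion, bits_per_weight) + overhead_gb
    return needed <= vram_gb


# Assumption: ~4.85 bits/weight as a ballpark for Q4_K_M
size_14b = estimate_weights_gb(14, 4.85)
print(f"14B at ~4.85 bits/weight: about {size_14b:.1f} GB")
print("Fits in 4 GB VRAM:", fits_in_vram(14, 4.85, vram_gb=4))
```

By this estimate the 14B model needs roughly twice the card's VRAM for the weights alone, so part of it has to sit in system RAM or the whole thing has to run on CPU.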

what is the advantage of doing something like this? i am a layperson

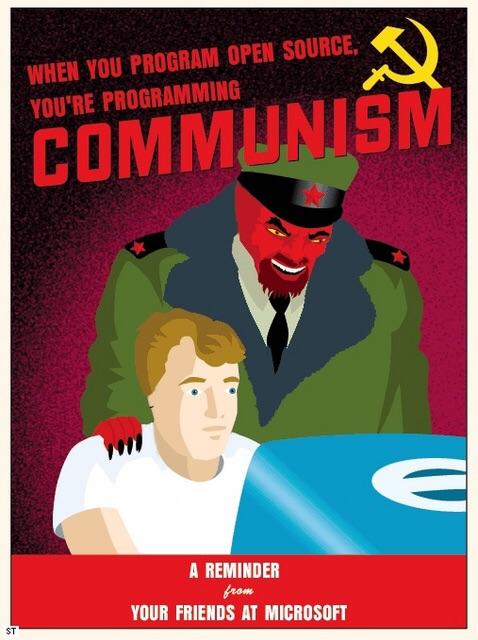

Privacy and ability to generate content you want. Using commercial services like OpenAI means your data is sent to their servers, so anything you query is known to the company, and their models are often restricted in terms of content they will allow you to generate. For example, Google’s Gemini will refuse to deal with many political subjects.

is there a guide how to use this? i downloaded the zip file but i have no idea what to do with it

Oh haha, it’s a bit tricky if you’re not technical. The easiest way is to use Docker, but that’s a whole thing in itself. If you just want an app you can run, one of these is easier to get going with

Thanks for the tips, I will check those out

Why should I use this instead of Ollama? Ollama is considered the local AI standard and is supported by a ton of other open-source software. For example, you can connect Ollama with the Smart Connections plugin for Obsidian, which lets you chat with and analyze your Obsidian notes.

This one is multimodal and can generate images.

You can run multimodal models like LLaVA and LLaMA on Ollama as well.

The AI models are coded and used in a way that makes them basically platform-agnostic, so the specific platform (Ollama, LocalAI, vLLM, llama.cpp, etc.) you run them with ends up being irrelevant.

Because of that, the only reasons to use one platform over another are if it’s best for your specific use case (depends), it’s the best supported (Ollama by far), or if it has the best performance (vLLM seems to win right now).

Ah gotcha, I thought Ollama was text only