cross-posted from: https://lemmy.intai.tech/post/25821

u/Alphyn

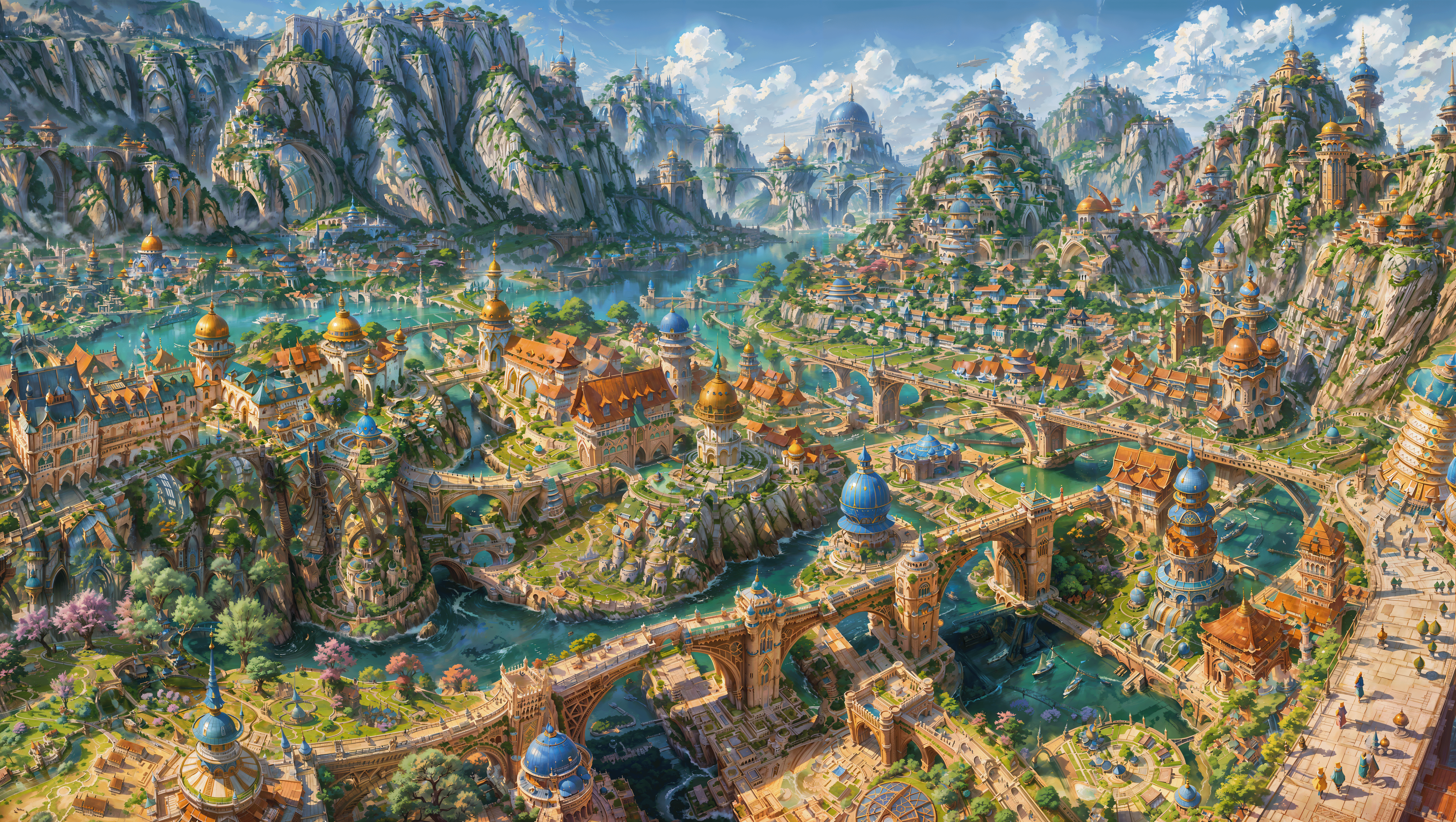

The workflow is quite simple. Just load a pic into img2img, use the same size as the original image, and enable the tile ControlNet. Set a high denoise ratio. Run it, and maybe feed the result back and run it a couple more times. Then enable Ultimate SD Upscale, set the ratio to 2x and run it again. Then accidentally run it again. Naturally, you put the result of each run back into img2img and update the picture size. The model is RPGArtistTools3.
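The pass sequence above can be sketched as a small Python plan. This is only bookkeeping, not a generation script, and it calls no Stable Diffusion API.

It rests on a few assumptions. It uses three same-size tile ControlNet passes and two 2x Ultimate SD Upscale passes, which is one reading of "a couple more times" and "accidentally run it again". The names `Pass` and `plan_passes` are hypothetical. The sketch tracks the size of the image each run produces, which is the size you enter when feeding that result back into img2img.

```python
from dataclasses import dataclass


@dataclass
class Pass:
    # Hypothetical record of one run: which mode was used and the output size.
    mode: str
    width: int
    height: int


def plan_passes(width, height, refine_passes=3, upscale_passes=2, scale=2):
    """Return the assumed sequence of runs with the image size each one produces.

    The pass counts are assumptions, not values given in the post.
    """
    passes = []
    # Refinement runs: img2img + tile ControlNet at the original size,
    # each output fed straight back in without resizing.
    for _ in range(refine_passes):
        passes.append(Pass("img2img+tile", width, height))
    # Upscale runs: each takes the previous output and multiplies both sides
    # by `scale`, so the img2img size must be updated before the next run.
    for _ in range(upscale_passes):
        width, height = width * scale, height * scale
        passes.append(Pass("ultimate_sd_upscale", width, height))
    return passes


if __name__ == "__main__":
    for p in plan_passes(512, 768):
        print(p.mode, p.width, p.height)
```

Under these assumptions, a 512x768 start ends at 2048x3072 after two 2x upscales. Each doubling quadruples the pixel count, which helps explain why repeated upscale runs get slow quickly.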

Oh yes, terrible indeed. Saved.

The final image is actually quite cool! But yes, I think we could use a LoRA to direct the granularity of the details being generated, progressively scaling it down as we upscale to normalize the generation a bit. This would let us keep higher denoise ratios while avoiding the “fractal” generation.

It looks amazing as long as you don’t zoom in, tbh.

Very cool! It would be cool if you could add variation to the areas (like a market, port, forts, different architecture, etc.). I guess it could be done by combining different images from different prompts.

Wow, that’s actually really cool. Wish I could find some good tutorials on how to use ControlNet, especially for poses. I’m getting real sick of the same 5 poses over and over again, and yet if I try to specify “arm across chest”, my character drops her arms and faces straight towards the camera.

I have two ways to do that:

- I bought a few dolls specifically for that. I just take shots with my camera and use them in ControlNet.

- If you learn the basics of Blender, there are posable models available that generate the skeleton and the depth maps for hands and feet automatically. The advantage is that these are not reconstructed from an image but exact, which avoids misclassification errors.